When hosting your online business on a platform, it's essential that your app remains functional under high load. While the Vercel platform handles billions of requests each week, we do understand that you may wish to perform a load test. Read on to learn about Vercel's policies for planning and executing load tests.

WARNING: Load testing is only permitted on Enterprise plans. If you wish to perform a load test prior to starting an Enterprise contract, this must be discussed with the sales manager responsible for your account.

If no prior warning is given before conducting a load test, you are likely to be in breach of the fair usage policy. In these cases it is likely that the IP addresses being used to perform the load test will be blocked shortly after starting due to abnormal traffic patterns.

Load testing is the process of determining whether your site can handle a large number of visitors when live by simulating multiple requests to it.

The Vercel platform was built to provide teams with peace of mind when launching their websites. As a Serverless and CDN solution, Vercel is highly scalable out of the box and doesn't require human intervention to adjust server instances or load balancers. See the Serverless Functions documentation to learn more.

As an Enterprise customer, one of your most important perks is access to our Solutions team. The Solutions team is made up of Next.js experts who can help with architecture choices and identify possible points of failure. You can reach out to the team before performing any load tests. It is important to verify that your upstream providers can handle the load and that your functions are as performant as possible.

The bottleneck of your application is likely to be one of the APIs, databases, CMSes, or data stores used in server-side code. Load testing those resources directly can therefore give you a good picture of the state of your deployment.

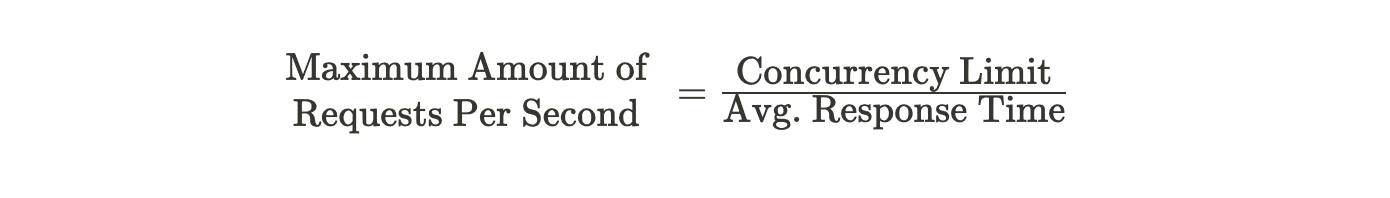

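Assuming your backend can keep up, Serverless Functions that return in 200ms allow your application to handle up to 5000 requests per second in a single region (a figure that corresponds to 1000 concurrent executions). To calculate this number, use the following equation:

$$\text{maximum requests per second} = \frac{\text{concurrency limit}}{\text{average response time (in seconds)}}$$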

The formula above represents the maximum number of requests per second for Serverless Functions in a single Vercel region. Note that the concurrency limit is 30,000 on Hobby and Pro plans and 100,000 on Enterprise plans.

Your functions' average response time is usually what limits your application's throughput, which is why it is vital to test upstream providers and confirm they reply to calls as quickly as possible.

According to the equation, if the average response time increases given a constant concurrency limit, the maximum number of requests per second will decrease proportionally.

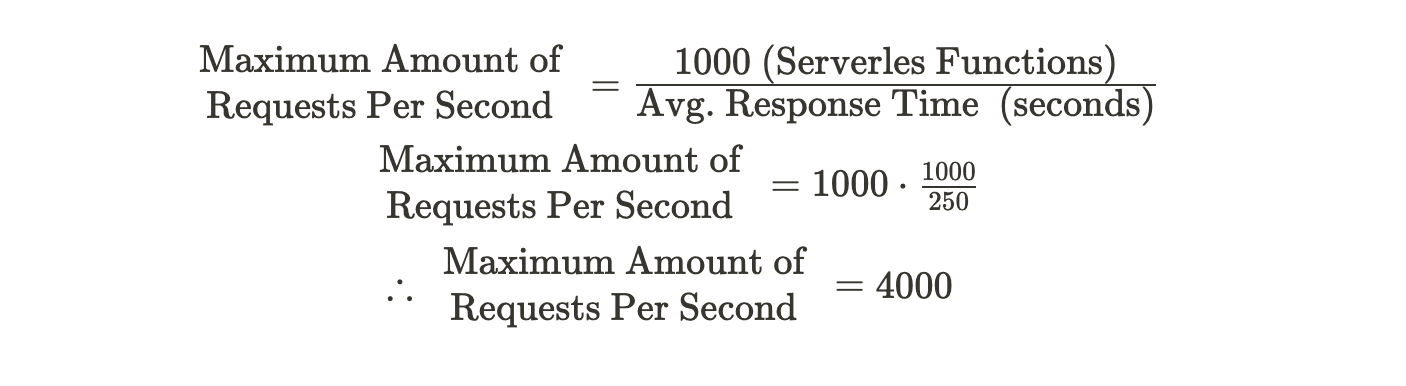

As an example, take a static landing page that calls an authentication API route a single time at the path /api/auth. The authentication function is a little slow and returns, on average, in 250ms. Using an example of 1000 connections and assuming the function was deployed to a single region, you would have the following result:

$$\frac{1000}{0.25\ \text{s}} = 4000\ \text{requests per second}$$

This means the website could support approximately 4000 new visitors each second if those visits trigger Serverless Functions. If 5000 visitors access the page in the same second, it is possible that a thousand of them will see a failure from /api/auth.
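The arithmetic in this example can be sketched in TypeScript. This is a minimal illustration, not a Vercel API: the function names `maxRequestsPerSecond` and `estimateExcessRequests` are hypothetical, and the sketch assumes a single region and exactly one function invocation per visitor.

```typescript
// Maximum sustainable requests per second in one region:
// concurrency limit divided by the average response time in seconds.
// Written as (concurrency * 1000) / ms to stay in integer arithmetic.
function maxRequestsPerSecond(concurrency: number, avgResponseMs: number): number {
  if (concurrency <= 0 || avgResponseMs <= 0) {
    throw new RangeError("concurrency and avgResponseMs must be positive");
  }
  return (concurrency * 1000) / avgResponseMs;
}

// Requests arriving in one second beyond the computed capacity.
// This is a rough upper bound on how many visitors may see a failure.
function estimateExcessRequests(arrivalsPerSecond: number, capacityPerSecond: number): number {
  return Math.max(0, arrivalsPerSecond - capacityPerSecond);
}

// The /api/auth example: 1000 connections, 250ms average response time.
const capacity = maxRequestsPerSecond(1000, 250);
const excess = estimateExcessRequests(5000, capacity);
console.log(`capacity: ${capacity} req/s, excess: ${excess} req/s`);
```

Halving the response time doubles the capacity, which is why optimizing upstream latency is usually the most effective lever.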

There are a number of ways to expand the capacity of your Serverless Functions:

- Caching: If the data returned by the API or page can be cached and is public, consider adding a Cache-Control header to the response. Please refer to the documentation on cacheable responses.

- Deploying to multiple regions: Enterprise customers can deploy to multiple regions. However, you should always consider using regions as close as possible to your data source. Check our article on How to choose the best region for your deployment. If you still want to deploy to multiple regions, check out the regions configuration documentation.

- Reducing Serverless Function response time: It is always best to optimize the response time of your Serverless Functions. You should always set timeouts for database queries or API calls, and deploy functions geographically close to your data sources.
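As a rough illustration of the caching and timeout advice above, the sketch below builds a Cache-Control value for a public response and wraps an upstream call in a timeout. The names `publicCacheControl`, `withTimeout`, and `handler`, the 2000ms timeout, and the 60/300 second cache durations are all assumptions chosen for the example. It uses the web-standard `Response` class, so treat the handler shape as a sketch rather than the required signature for your framework.

```typescript
// Build a Cache-Control value for a public, cacheable response.
// s-maxage controls how long a shared cache (such as a CDN) may reuse it.
function publicCacheControl(sMaxAgeSeconds: number, staleWhileRevalidateSeconds = 0): string {
  const parts = ["public", `s-maxage=${sMaxAgeSeconds}`];
  if (staleWhileRevalidateSeconds > 0) {
    parts.push(`stale-while-revalidate=${staleWhileRevalidateSeconds}`);
  }
  return parts.join(", ");
}

// Reject if `work` has not settled within timeoutMs.
// Note: this stops waiting but does not cancel the underlying operation.
function withTimeout<T>(work: Promise<T>, timeoutMs: number): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<never>((_, reject) => {
    timer = setTimeout(() => reject(new Error(`Timed out after ${timeoutMs}ms`)), timeoutMs);
  });
  return Promise.race([work, timeout]).finally(() => clearTimeout(timer));
}

// Hypothetical handler: `loadData` stands in for a database query or API call.
async function handler(loadData: () => Promise<unknown>): Promise<Response> {
  try {
    const data = await withTimeout(loadData(), 2000);
    return new Response(JSON.stringify(data), {
      status: 200,
      headers: {
        "Content-Type": "application/json",
        "Cache-Control": publicCacheControl(60, 300),
      },
    });
  } catch {
    // Fail fast instead of holding a function instance open.
    return new Response(JSON.stringify({ error: "upstream failed or timed out" }), {
      status: 502,
      headers: { "Content-Type": "application/json" },
    });
  }
}
```

Failing fast matters for throughput: a function that waits indefinitely on a slow upstream occupies a concurrency slot for the whole wait.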

New: Enterprise customers using Fluid Compute have additional considerations for load testing due to dynamic resource allocation and enhanced scaling capabilities.

Fluid Compute provides dynamic resource allocation that can significantly improve your application's ability to handle load spikes. However, this introduces new considerations for load testing:

- Warm-up period: Allow 2-3 minutes for initial scaling to reach optimal performance

- Resource monitoring: Track GB-hours consumption in addition to invocations

- Burst capacity: Test both sustained load and traffic spikes separately

- Cost implications: Fluid Compute pricing differs from standard functions

When running the tests themselves, the following practices apply:

- Establish baseline performance metrics before testing

- Implement gradual load increases to allow proper scaling

- Test different traffic patterns (steady, burst, gradual ramp)

- Monitor both performance and cost metrics during tests

- Validate scaling behavior meets your requirements

- Test failover and recovery scenarios
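The traffic patterns mentioned above (steady, burst, gradual ramp) can be described as a per-second target rate that you feed into your load testing tool. The sketch below is one possible way to generate such profiles. `buildLoadProfile`, the 10% burst baseline, the middle-fifth spike window, and the 150 second default warm-up (inside the 2-3 minute range noted above) are all illustrative assumptions.

```typescript
type TrafficPattern = "steady" | "burst" | "ramp";

// Returns the target requests per second for each second of the test.
function buildLoadProfile(
  pattern: TrafficPattern,
  peakRps: number,
  durationSeconds: number,
  warmUpSeconds = 150,
): number[] {
  const profile: number[] = [];
  for (let t = 0; t < durationSeconds; t++) {
    let fraction: number; // share of peakRps to send during second t
    if (pattern === "steady") {
      // Climb linearly during warm-up, then hold the peak.
      fraction = warmUpSeconds > 0 ? Math.min(1, (t + 1) / warmUpSeconds) : 1;
    } else if (pattern === "burst") {
      // Idle at 10% of peak, spiking to the peak for the middle fifth of the test.
      const inSpike = t * 10 >= durationSeconds * 4 && t * 10 < durationSeconds * 6;
      fraction = inSpike ? 1 : 0.1;
    } else {
      // Gradual ramp: increase linearly across the whole test.
      fraction = (t + 1) / durationSeconds;
    }
    profile.push(Math.round(peakRps * fraction));
  }
  return profile;
}

// Small profiles, kept short so the shape is easy to read.
const ramp = buildLoadProfile("ramp", 100, 4);
const steady = buildLoadProfile("steady", 100, 4, 2);
const burst = buildLoadProfile("burst", 100, 10);
console.log({ ramp, steady, burst });
```

Running each pattern as a separate test makes it easier to tell sustained-load behavior apart from spike behavior in the resulting metrics.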

After reading the above, if you still want to proceed with load testing on Vercel, please contact your Vercel representative. They will coordinate the tests with your team, the Vercel Infrastructure team, and the Solutions team to ensure everyone is aligned.

If you have received approval to perform a load test, from either Vercel Support or your representative, you will be asked to provide the following information:

- The start and end time/date

- Estimated maximum number of requests per second

- The target hostnames

- Geographical source (e.g., AWS/GCP region)

- Source IPs (must be fewer than 1000)

- Whether the test will be geographically distributed or localized

- New: Whether you're using Fluid Compute
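Before sending the details, it can help to collect them in one structure and check them for obvious gaps. The sketch below is hypothetical: `LoadTestRequest` and `validateLoadTestRequest` are not a Vercel API or an official submission format, and the field names are assumptions that mirror the list above. The only rule taken from this guide is that there must be fewer than 1000 source IPs.

```typescript
// Hypothetical shape mirroring the information requested above.
interface LoadTestRequest {
  startTime: Date;
  endTime: Date;
  maxRequestsPerSecond: number;
  targetHostnames: string[];
  geographicalSource: string; // e.g. an AWS or GCP region name
  sourceIps: string[];
  geographicallyDistributed: boolean;
  usesFluidCompute: boolean;
}

// Returns a list of problems; an empty list means the request looks complete.
function validateLoadTestRequest(req: LoadTestRequest): string[] {
  const problems: string[] = [];
  if (req.endTime.getTime() <= req.startTime.getTime()) {
    problems.push("endTime must be after startTime");
  }
  if (!(req.maxRequestsPerSecond > 0)) {
    problems.push("maxRequestsPerSecond must be a positive number");
  }
  if (req.targetHostnames.length === 0) {
    problems.push("at least one target hostname is required");
  }
  // The guide requires fewer than 1000 source IPs; requiring at least one
  // is an extra assumption of this sketch.
  if (req.sourceIps.length === 0 || req.sourceIps.length >= 1000) {
    problems.push("sourceIps must contain between 1 and 999 addresses");
  }
  return problems;
}

// Example values only: the hostname and IP come from documentation ranges.
const exampleRequest: LoadTestRequest = {
  startTime: new Date("2030-01-15T10:00:00Z"),
  endTime: new Date("2030-01-15T11:00:00Z"),
  maxRequestsPerSecond: 4000,
  targetHostnames: ["www.example.com"],
  geographicalSource: "AWS us-east-1",
  sourceIps: ["203.0.113.10"],
  geographicallyDistributed: false,
  usesFluidCompute: true,
};
console.log(validateLoadTestRequest(exampleRequest));
```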

Once the above information is received, the infrastructure team will review it. They will then approve the request via Vercel Support or request further information if necessary.

Load tests can cause a spike in usage, both on Serverless Function invocations and third-party services used by those functions. If not handled correctly, this can cause unwanted additional usage and billing.

Where possible, create a dedicated testing environment, or add logic that mocks third-party responses during the test.
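One way to mock third-party responses is to route calls through a small selector that swaps in a canned implementation during a load test. The sketch below assumes a hypothetical pricing service; `PriceQuote`, `QuoteFetcher`, `mockQuoteFetcher`, and `selectQuoteFetcher` are invented for illustration, and how you set the `loadTestMode` flag (for example from an environment variable) is up to your application.

```typescript
// Hypothetical third-party dependency: a pricing service.
interface PriceQuote {
  sku: string;
  priceCents: number;
}
type QuoteFetcher = (sku: string) => Promise<PriceQuote>;

// Canned, deterministic response that never leaves the function,
// so the test does not generate third-party usage or billing.
const mockQuoteFetcher: QuoteFetcher = async (sku) => ({ sku, priceCents: 1999 });

// Pick the mock when the load test flag is on, the real client otherwise.
function selectQuoteFetcher(real: QuoteFetcher, loadTestMode: boolean): QuoteFetcher {
  return loadTestMode ? mockQuoteFetcher : real;
}

// Stand-in for the real client; a real one would call the provider's API.
const realQuoteFetcher: QuoteFetcher = async (sku) => {
  throw new Error(`real provider call for ${sku} is not available in this sketch`);
};

const fetchQuote = selectQuoteFetcher(realQuoteFetcher, true);
fetchQuote("sku-123").then((quote) => console.log(quote));
```

Keep in mind that a mock removes upstream latency from the measurement, so results from mocked runs show the best case for your functions rather than end-to-end behavior.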

Finally, check your contract and warn stakeholders that, depending on the size of the test, additional usage and charges may be incurred.

Every request that reaches Vercel produces an entry in the Log Drains provider of your choice. The rows stored in the provider can give you an idea of your Serverless Functions' P75 (75th percentile) duration, the status codes returned, and any error messages.
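Once the rows are exported from your provider, summarizing them takes only a few lines. The sketch below assumes a simplified, hypothetical row shape (`statusCode`, `durationMs`); the real fields depend on your Log Drains provider and format. The percentile uses the nearest-rank method, which is one of several common definitions.

```typescript
// Hypothetical, simplified log row; real field names depend on your provider.
interface LogRow {
  statusCode: number;
  durationMs: number;
  message?: string;
}

// Nearest-rank percentile: the smallest value with at least p% of the
// samples at or below it.
function percentile(values: number[], p: number): number {
  if (values.length === 0) {
    throw new RangeError("values must not be empty");
  }
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// Count how many rows were returned with each status code.
function countStatusCodes(rows: LogRow[]): Record<number, number> {
  const counts: Record<number, number> = {};
  for (const row of rows) {
    counts[row.statusCode] = (counts[row.statusCode] ?? 0) + 1;
  }
  return counts;
}

const rows: LogRow[] = [
  { statusCode: 200, durationMs: 120 },
  { statusCode: 200, durationMs: 80 },
  { statusCode: 504, durationMs: 200, message: "upstream timeout" },
  { statusCode: 200, durationMs: 150 },
];
const p75 = percentile(rows.map((row) => row.durationMs), 75);
const statusCounts = countStatusCodes(rows);
console.log({ p75, statusCounts });
```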