Integrating AI experiences into web applications has become increasingly important. Combined with Next.js, LangChain offers a straightforward way to bring AI-driven functionality to your applications.

LangChain is a toolkit designed to simplify interacting with and chaining together large language models (LLMs), such as those from OpenAI, Cohere, Hugging Face, and more. It is an open-source project that provides tools and abstractions for working with AI models, agents, vector stores, and other data sources for retrieval augmented generation (RAG).

To better understand LangChain, consider a construction kit. Each LLM is a different type of building block with its own characteristics and abilities, and LangChain is the tool that lets you connect those blocks in various ways. Just as one set of blocks can produce many different structures, LangChain lets you build a diverse range of AI applications by chaining together different models.

In this guide, you'll learn how to build an AI chatbot using Next.js, LangChain, OpenAI LLMs, and the Vercel AI SDK.

To get started, clone the LangChain + Next.js starter template, which showcases how to use various LangChain modules for diverse use cases, including:

- Simple chat interactions

- Structured outputs from LLM calls

- Handling multi-step questions with autonomous AI agents

- Retrieval augmented generation (RAG) with both chains and agents

Most of these examples use the Vercel AI SDK to stream tokens to the client, so responses appear as they are generated instead of only after the full completion.
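The AI SDK's hooks handle streaming for you, but the underlying idea is simple: read the response body chunk by chunk and render each piece as it arrives. The sketch below illustrates that with the standard Web Streams API; `readTokenStream` and its `onToken` callback are hypothetical names, not part of the AI SDK or the template.

```typescript
// Hypothetical helper: read a streamed text response chunk by chunk, handing
// each decoded piece to onToken as soon as it arrives (e.g. to append to the UI).
async function readTokenStream(
  body: ReadableStream<Uint8Array>,
  onToken: (text: string) => void,
): Promise<string> {
  const reader = body.getReader();
  const decoder = new TextDecoder();
  let full = "";
  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    // stream: true keeps multi-byte characters intact across chunk boundaries.
    const text = decoder.decode(value, { stream: true });
    if (text) {
      full += text;
      onToken(text);
    }
  }
  // Flush any bytes the decoder is still buffering.
  const tail = decoder.decode();
  if (tail) {
    full += tail;
    onToken(tail);
  }
  return full;
}
```

In a browser you might pass it `response.body` from a `fetch` to the chat route; the exact request body the route expects is not shown here.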

You can check out a live demo, or deploy your own version of the template with one click.

First, clone the repository to your local machine.

Next, you'll need to set up environment variables in your repo's .env.local file. Copy the .env.example file to .env.local. To start with the basic examples, you'll just need to add your OpenAI API key, which you can find here.
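Because different examples need different keys, it can help to fail fast when one is missing. The helper below is a hypothetical sketch, not part of the template: it takes an environment record (for instance `process.env` inside a route handler) and reports which required variables are unset or empty. `OPENAI_API_KEY` and `SERPAPI_API_KEY` are the names used in this guide; grouping them per example is an assumption for illustration.

```typescript
// Hypothetical helper (not part of the starter template): checks that the
// environment variables an example needs are present and non-empty.
type Env = Record<string, string | undefined>;

// Keys named in this guide; the per-example grouping is an illustrative assumption.
const REQUIRED_KEYS: Record<string, string[]> = {
  chat: ["OPENAI_API_KEY"],
  agents: ["OPENAI_API_KEY", "SERPAPI_API_KEY"],
};

// Returns the required keys that are unset or whitespace-only.
function missingEnvVars(env: Env, example: string): string[] {
  const required = REQUIRED_KEYS[example] ?? [];
  return required.filter((key) => !env[key]?.trim());
}

// Throws a readable error listing every missing key at once.
function assertEnv(env: Env, example: string): void {
  const missing = missingEnvVars(env, example);
  if (missing.length > 0) {
    throw new Error(`Missing env vars for "${example}": ${missing.join(", ")}`);
  }
}
```

Calling something like `assertEnv(process.env, "chat")` at the top of a route surfaces a clear message instead of a failed API call later.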

Next, install the required packages using your preferred package manager (e.g. pnpm). Once that's done, run the development server:

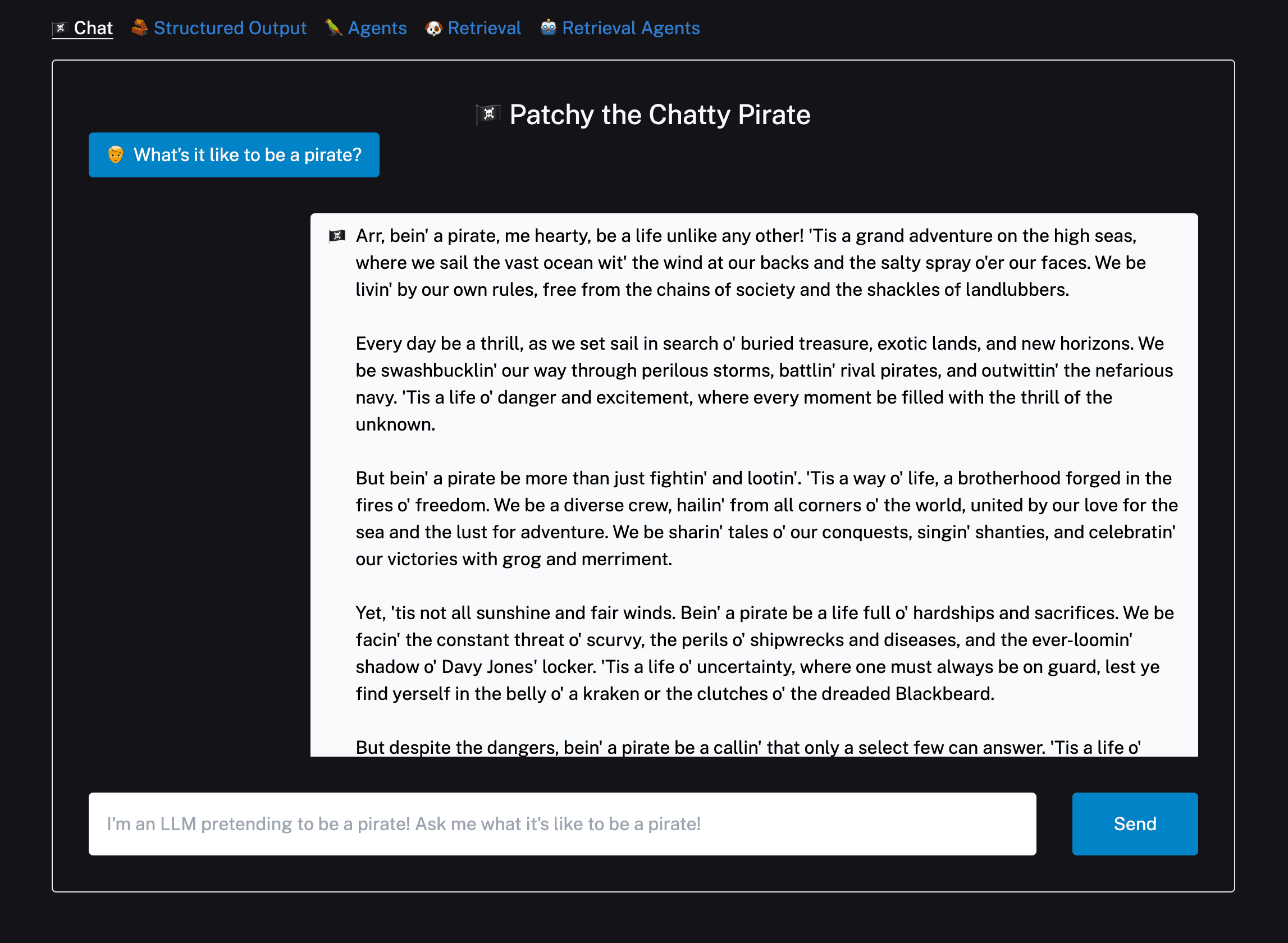

Open http://localhost:3000 in your browser to see the result. Ask the bot something and you'll see a streamed response.

You can start editing the page by modifying app/page.tsx. The page auto-updates as you edit the file.

Backend logic lives in app/api/chat/route.ts. From here, you can change the prompt and model, or add other modules and logic.
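A common pattern in a chat route is to fold the earlier messages sent by the client into a plain-text history that the prompt can include. The sketch below is self-contained and assumes a simplified `{ role, content }` message shape, similar to what the AI SDK's chat hook sends; `TEMPLATE`, `formatMessage`, and `buildPrompt` are illustrative names, not copied from the template.

```typescript
// Simplified message shape; an assumption modeled on what a chat UI sends.
interface ChatMessage {
  role: "user" | "assistant" | "system";
  content: string;
}

// Illustrative prompt with {chat_history} and {input} placeholders.
const TEMPLATE = `You are a helpful assistant.

Current conversation:
{chat_history}

User: {input}
AI:`;

// Render one message as "role: content".
function formatMessage(message: ChatMessage): string {
  return `${message.role}: ${message.content}`;
}

// Treat the last message as the new input and earlier ones as history.
function buildPrompt(messages: ChatMessage[]): string {
  if (messages.length === 0) throw new Error("No messages supplied");
  const previous = messages.slice(0, -1).map(formatMessage).join("\n");
  const current = messages[messages.length - 1].content;
  // A single regex pass fills both placeholders, so user text that happens to
  // contain "{input}" or "$&" is inserted literally, never re-substituted.
  return TEMPLATE.replace(/\{(chat_history|input)\}/g, (_match: string, key: string) =>
    key === "chat_history" ? previous : current,
  );
}
```

In the real route, the resulting prompt would go to a chat model through LangChain and the output would be streamed back with the AI SDK; that wiring is left out here.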

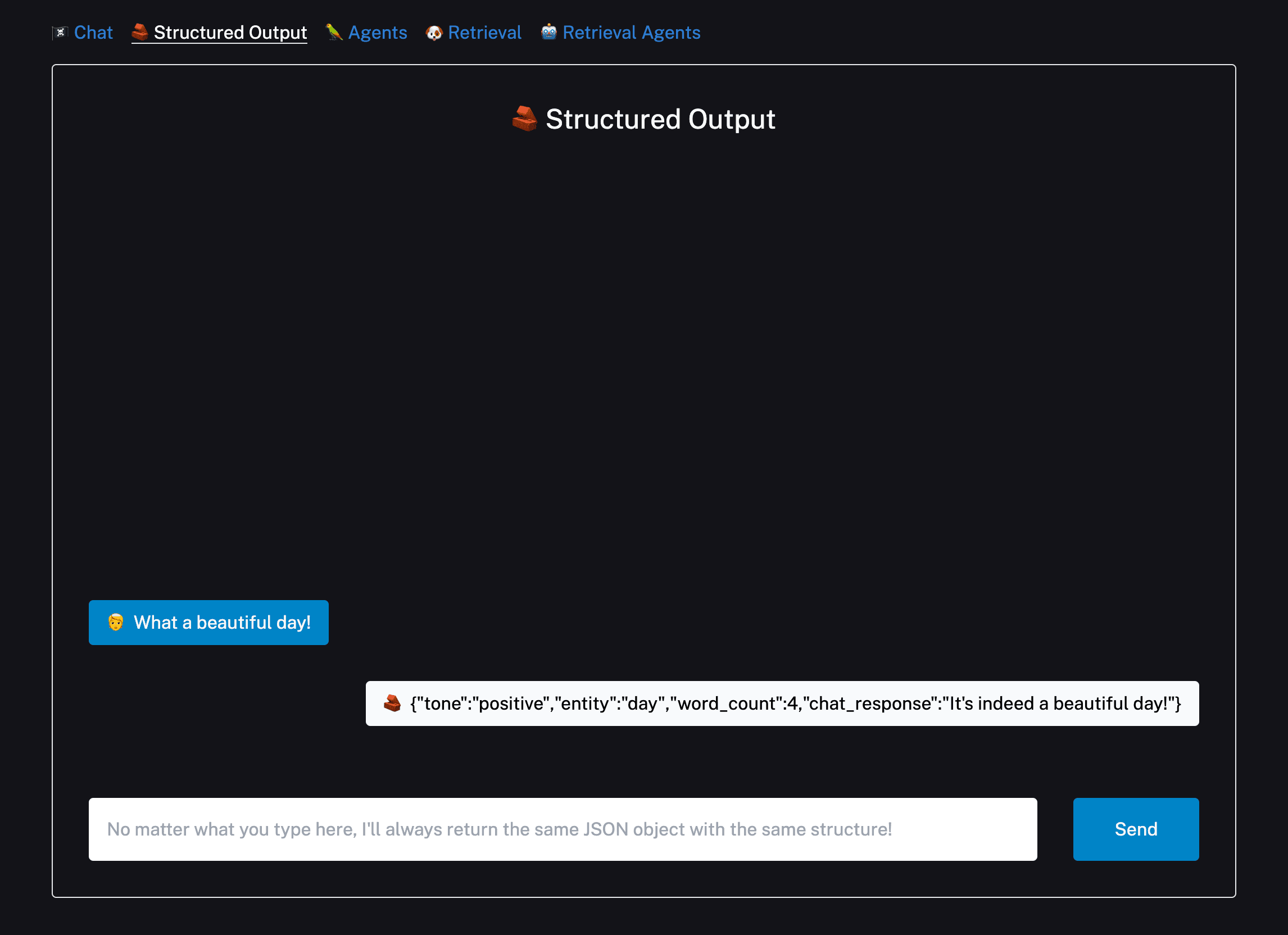

The second example in the template shows how to have a model return output according to a specific schema using OpenAI Functions.

For context, OpenAI Functions (function calling) lets developers describe functions to the model as part of a request. Instead of only generating free-form text, the model can respond with a JSON object containing arguments for one of those functions. Your code can then run the function or use the arguments directly, which makes the model's output structured and machine-readable.

Click the Structured Output link in the navbar to try it out.

The chain in this example uses Zod, a popular schema-validation library, to define a schema, then converts it to the JSON Schema format OpenAI expects. It passes that schema to OpenAI as a function definition, along with a function_call parameter that forces the model to return arguments in the specified format.
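To make the mechanics concrete, here is a dependency-free sketch of what such a request and response look like. It writes the JSON Schema by hand (the template derives it from a Zod schema instead) and uses the legacy `functions` / `function_call` fields of OpenAI's Chat Completions API. The function name `output_formatter`, the `tone` and `word_count` fields, and the model name are assumptions for illustration.

```typescript
const TONES = ["positive", "negative", "neutral"] as const;
type Tone = (typeof TONES)[number];

// Hand-written JSON Schema for the desired output (the template builds this from Zod).
const outputSchema = {
  type: "object",
  properties: {
    tone: { type: "string", enum: TONES },
    word_count: { type: "integer", description: "Number of words in the input" },
  },
  required: ["tone", "word_count"],
} as const;

// Request body in the legacy function-calling format. Naming the function in
// function_call forces the model to reply with JSON arguments, not free text.
function buildRequestBody(input: string) {
  return {
    model: "gpt-3.5-turbo", // placeholder model name
    messages: [{ role: "user", content: input }],
    functions: [
      {
        name: "output_formatter", // hypothetical function name
        description: "Always use this to format your response.",
        parameters: outputSchema,
      },
    ],
    function_call: { name: "output_formatter" },
  };
}

interface StructuredOutput {
  tone: Tone;
  word_count: number;
}

function isTone(value: unknown): value is Tone {
  return typeof value === "string" && (TONES as readonly string[]).includes(value);
}

// The model returns arguments as a JSON *string*; parse and validate it, since
// the model can still emit values that do not match the schema.
function parseFunctionArguments(args: string): StructuredOutput {
  const data: unknown = JSON.parse(args);
  if (typeof data !== "object" || data === null) throw new Error("Expected an object");
  const { tone, word_count } = data as Record<string, unknown>;
  if (!isTone(tone)) throw new Error(`Unexpected tone: ${String(tone)}`);
  if (typeof word_count !== "number" || !Number.isInteger(word_count)) {
    throw new Error("word_count must be an integer");
  }
  return { tone, word_count };
}
```

The arguments string would come from `choices[0].message.function_call.arguments` in the API response; validating it is what a Zod `parse` call would do for you.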

For more details, check out this documentation page.

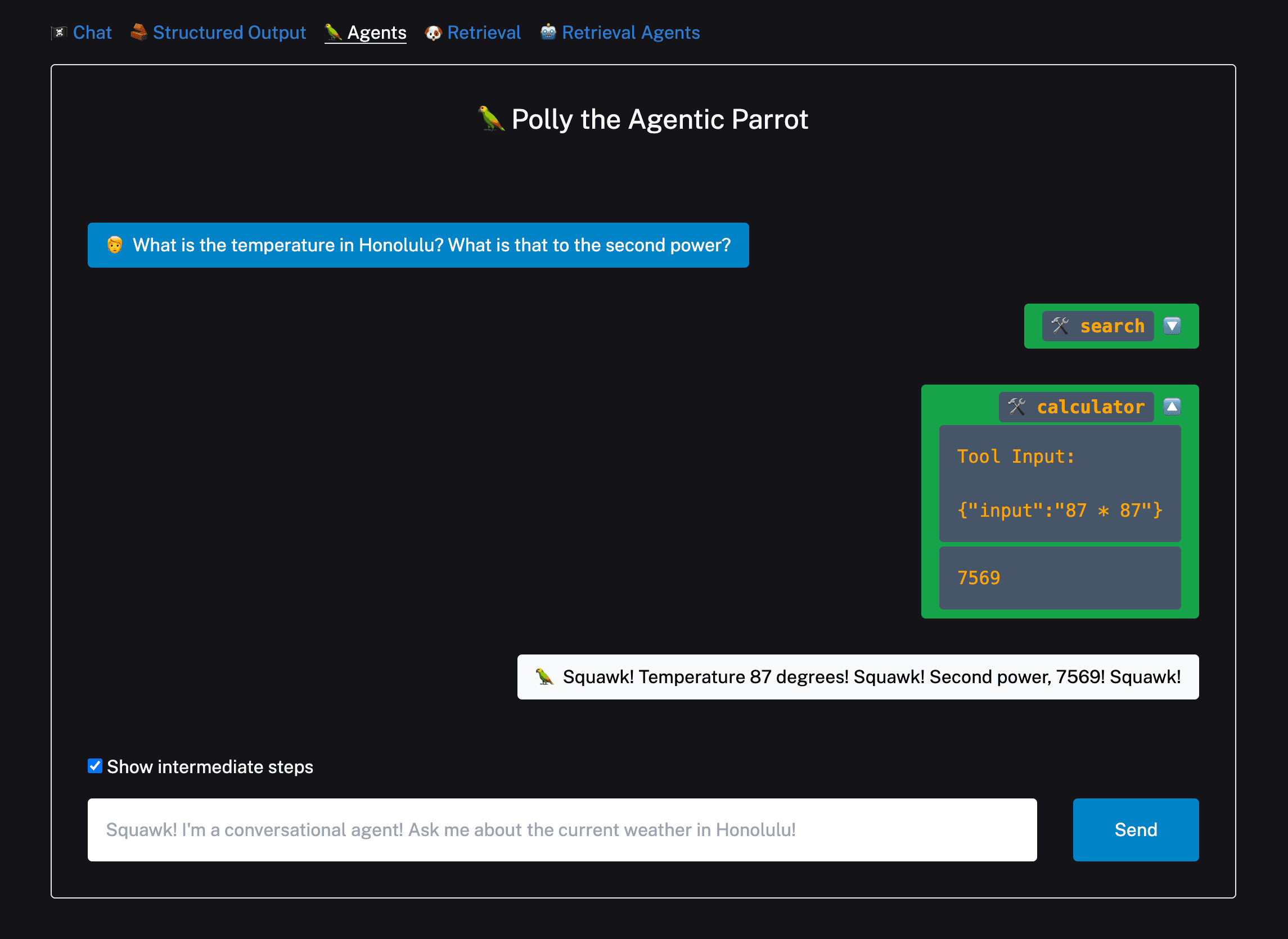

Autonomous AI agents are systems in which an LLM decides which action to take next, such as calling a search tool, observes the result, and repeats until it can answer, without a human directing each step. Because they can call tools that fetch real-time data, agents can handle multi-step questions that a single LLM call cannot.
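At its core, an agent runs a decide, act, observe loop. The sketch below shows only that control flow: the `Model` is a scripted stand-in for an LLM and `fakeSearch` is a hypothetical tool, so this is not LangChain's implementation, though the OpenAI Functions agent used in the template works on a conceptually similar loop.

```typescript
// A model decision: call a named tool with some input, or give a final answer.
type AgentStep =
  | { type: "tool"; tool: string; input: string }
  | { type: "final"; answer: string };

// What a tool returned, fed back to the model on the next turn.
interface Observation {
  tool: string;
  input: string;
  output: string;
}

type Model = (question: string, history: Observation[]) => Promise<AgentStep>;
type Tool = (input: string) => Promise<string>;

// Loop until the model answers, or give up after maxSteps to avoid running forever.
async function runAgent(
  question: string,
  model: Model,
  tools: Record<string, Tool>,
  maxSteps = 5,
): Promise<string> {
  const history: Observation[] = [];
  for (let step = 0; step < maxSteps; step++) {
    const decision = await model(question, history);
    if (decision.type === "final") return decision.answer;
    const tool = tools[decision.tool];
    // Report unknown tools back to the model instead of crashing.
    const output = tool ? await tool(decision.input) : `Unknown tool: ${decision.tool}`;
    history.push({ tool: decision.tool, input: decision.input, output });
  }
  throw new Error(`Agent did not finish within ${maxSteps} steps`);
}

// Scripted stand-ins for an LLM and a search tool (hypothetical, for illustration).
const scriptedModel: Model = async (_question, history) =>
  history.length === 0
    ? { type: "tool", tool: "search", input: "population of Paris" }
    : { type: "final", answer: `Based on search: ${history[0].output}` };

const fakeSearch: Tool = async (query) => `Top result for "${query}"`;
```

Swapping `scriptedModel` for a real function-calling model and `fakeSearch` for a SerpAPI-backed search gives the shape of the template's agent example.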

To try out the agent example, you'll need to give the agent access to the internet by setting SERPAPI_API_KEY in .env.local. Head over to the SerpAPI website and get an API key if you don't already have one.

You can then click the Agent example and try asking it more complex questions.

This example uses the OpenAI Functions agent, but there are a few other options you can try as well. See this documentation page for more details.

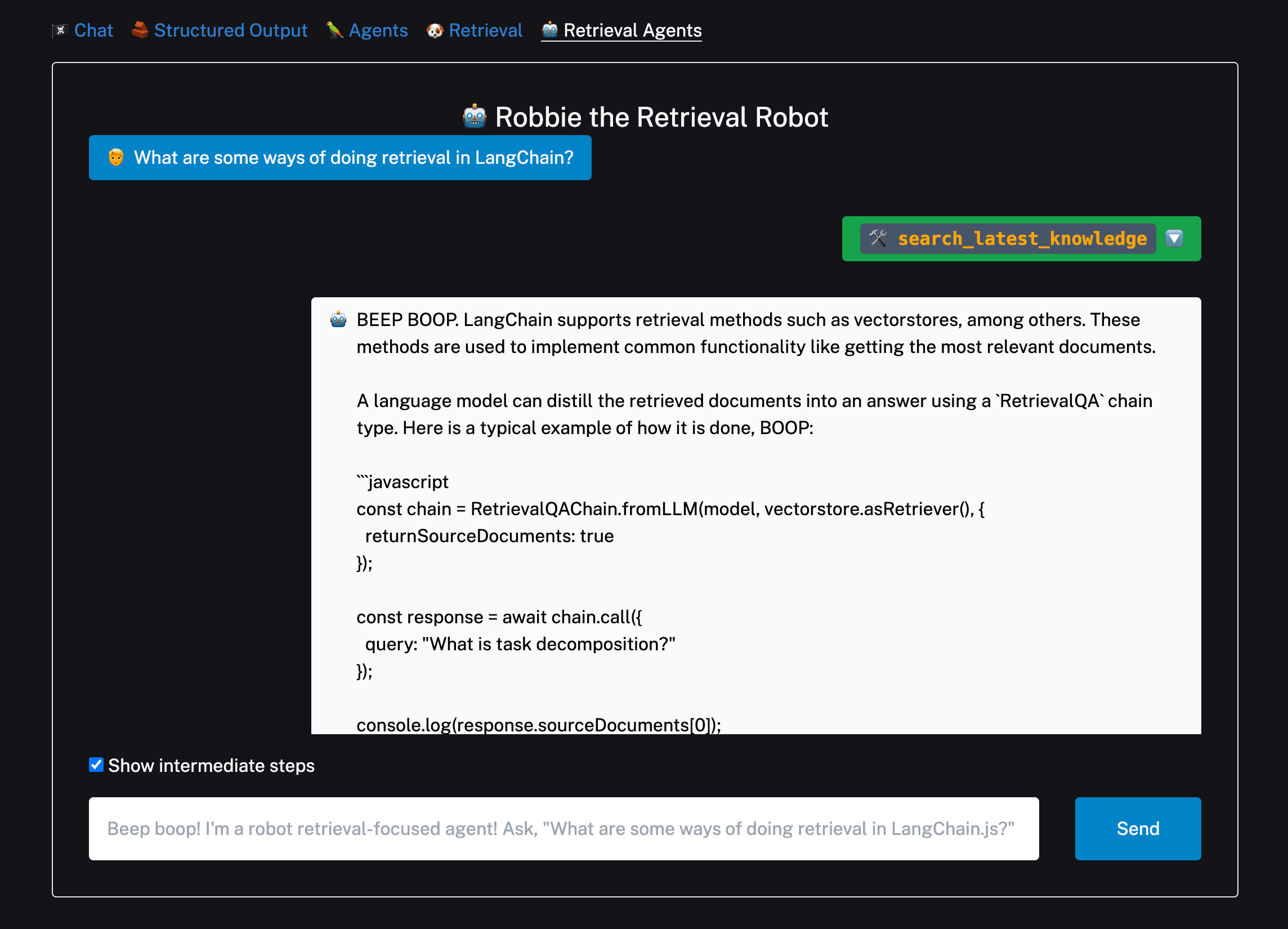

Retrieval augmented generation (RAG) combines information retrieval, usually via a vector database, with a language model to answer questions using external knowledge sources. By first fetching relevant content and then generating a response from it, RAG can give answers that are better grounded in that content than what the model could produce from its training data alone.
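The generation half of RAG often comes down to "stuffing" the retrieved passages into the prompt. The sketch below shows that step in isolation; the `RetrievedDoc` shape and `buildRagPrompt` helper are hypothetical, and retrieval is assumed to have already happened.

```typescript
// A retrieved chunk of text plus where it came from (hypothetical shape).
interface RetrievedDoc {
  pageContent: string;
  source: string;
}

// Combine retrieved passages and the user's question into one prompt.
// Numbering the passages makes it easy for the model to cite them.
function buildRagPrompt(question: string, docs: RetrievedDoc[]): string {
  const context = docs
    .map((doc, i) => `[${i + 1}] (${doc.source}) ${doc.pageContent}`)
    .join("\n");
  return [
    "Answer the question using only the context below.",
    "If the context does not contain the answer, say you don't know.",
    "",
    "Context:",
    context || "(no relevant documents found)",
    "",
    `Question: ${question}`,
  ].join("\n");
}
```

Telling the model to stay within the context and to admit when the answer is missing is a common way to reduce answers that ignore the retrieved documents.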

The retrieval examples both use Supabase as a vector store. However, you can swap in another supported vector store if preferred by changing the code under app/api/retrieval/ingest/route.ts, app/api/chat/retrieval/route.ts, and app/api/chat/retrieval_agents/route.ts.
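Swapping vector stores is easiest when route code depends only on a small storage contract. The sketch below defines a hypothetical `SimpleVectorStore` interface (not LangChain's `VectorStore` class) and an in-memory implementation that ranks documents by cosine similarity over precomputed embeddings; a Supabase-backed class could implement the same interface.

```typescript
interface StoredDoc {
  id: string;
  text: string;
  embedding: number[];
}

// Minimal storage contract the routes could depend on (hypothetical).
interface SimpleVectorStore {
  add(docs: StoredDoc[]): Promise<void>;
  search(queryEmbedding: number[], k: number): Promise<StoredDoc[]>;
}

// Cosine similarity: dot product divided by the product of vector lengths.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("Embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// In-memory backend: handy for tests, replaced by a real store in production.
class InMemoryVectorStore implements SimpleVectorStore {
  private docs: StoredDoc[] = [];

  async add(docs: StoredDoc[]): Promise<void> {
    this.docs.push(...docs);
  }

  // Score every document, sort by similarity (highest first), keep the top k.
  async search(queryEmbedding: number[], k: number): Promise<StoredDoc[]> {
    return this.docs
      .map((doc) => ({ doc, score: cosineSimilarity(queryEmbedding, doc.embedding) }))
      .sort((x, y) => y.score - x.score)
      .slice(0, k)
      .map(({ doc }) => doc);
  }
}
```

A linear scan like this is fine for small demos; dedicated vector stores use indexes so search stays fast as the number of documents grows.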

For Supabase, follow these instructions to set up your database, then get your database URL and private key and paste them into .env.local.

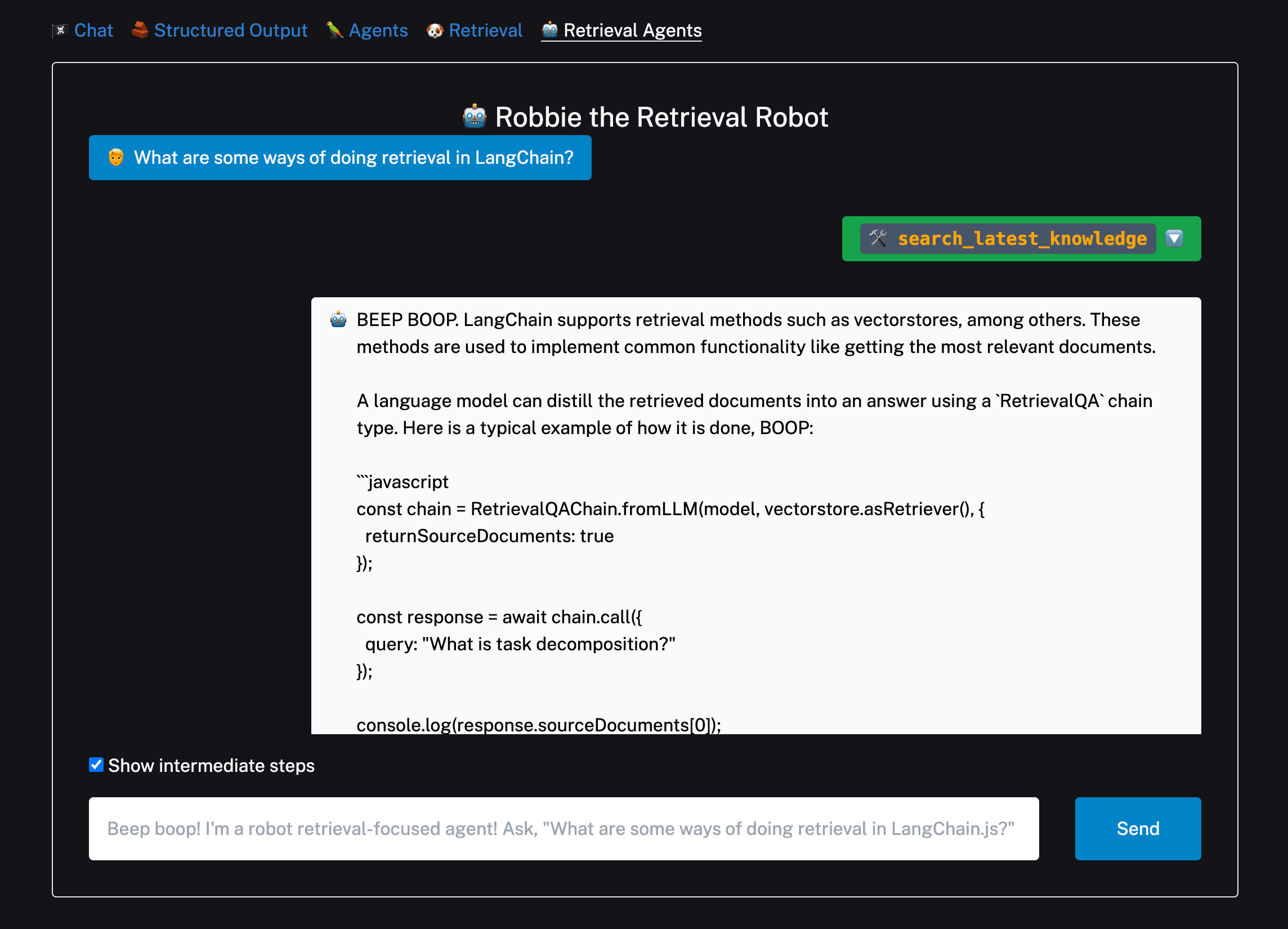

You can then switch to the Retrieval and Retrieval Agent examples. The default document text is pulled from the LangChain.js retrieval use case docs, but you can change it to whatever text you'd like.

For a given text, you only need to press Upload once. Pressing it again will re-ingest the docs, resulting in duplicates. You can clear your Supabase vector store by navigating to the console and running `DELETE FROM documents;`.
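The template does not deduplicate on upload, but if you want re-uploads to be harmless, one option is to derive a stable id from each chunk's text and skip chunks whose id is already stored. The sketch below is a hypothetical helper using the FNV-1a 32-bit hash to stay dependency-free; how you look up existing ids in your vector store is left as an assumption.

```typescript
// FNV-1a 32-bit hash: a small, dependency-free way to derive a stable id from
// chunk text. Collisions are possible; a production system might prefer SHA-256.
function contentId(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash.toString(16).padStart(8, "0");
}

interface IdentifiedChunk {
  id: string;
  text: string;
}

// Keep only chunks not already stored, also dropping repeats within the batch.
function newChunksOnly(chunks: string[], existingIds: Set<string>): IdentifiedChunk[] {
  const seen = new Set(existingIds);
  const fresh: IdentifiedChunk[] = [];
  for (const text of chunks) {
    const id = contentId(text);
    if (!seen.has(id)) {
      seen.add(id);
      fresh.push({ id, text });
    }
  }
  return fresh;
}
```

Storing the id with each row means pressing Upload twice on the same text inserts nothing the second time.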

After splitting, embedding, and uploading some text, you're ready to ask questions!
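Splitting matters because embeddings work best on focused passages rather than whole documents. The sketch below is a simplified, hypothetical splitter that slices by character count with a fixed overlap; LangChain's own text splitters, such as `RecursiveCharacterTextSplitter`, are smarter and prefer to break on paragraph and sentence boundaries.

```typescript
// Split text into chunks of at most chunkSize characters; each chunk repeats the
// last chunkOverlap characters of the previous one so context is not cut off.
function splitText(text: string, chunkSize: number, chunkOverlap: number): string[] {
  if (chunkSize <= 0) throw new Error("chunkSize must be positive");
  if (chunkOverlap < 0 || chunkOverlap >= chunkSize) {
    throw new Error("chunkOverlap must be in [0, chunkSize)");
  }
  const chunks: string[] = [];
  const step = chunkSize - chunkOverlap;
  for (let start = 0; start < text.length; start += step) {
    chunks.push(text.slice(start, start + chunkSize));
    // Stop once a chunk reaches the end so the tail is not emitted twice.
    if (start + chunkSize >= text.length) break;
  }
  return chunks;
}
```

Each resulting chunk would then be embedded and written to the vector store, which is what pressing Upload does in the template.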

For more info on retrieval chains, see this page. The specific variant of the conversational retrieval chain used here is composed using LangChain Expression Language, which you can read more about here.

You can learn more about retrieval agents here.

Combining LangChain and Next.js lets you make your web applications more interactive, intelligent, and user-friendly. This guide walked through setting up the starter template and trying its chat, structured output, agent, and retrieval examples.

Try it out for yourself by deploying the LangChain + Next.js starter template to Vercel today.