
Unify API access, track costs, and secure credentials with a centralized gateway layer

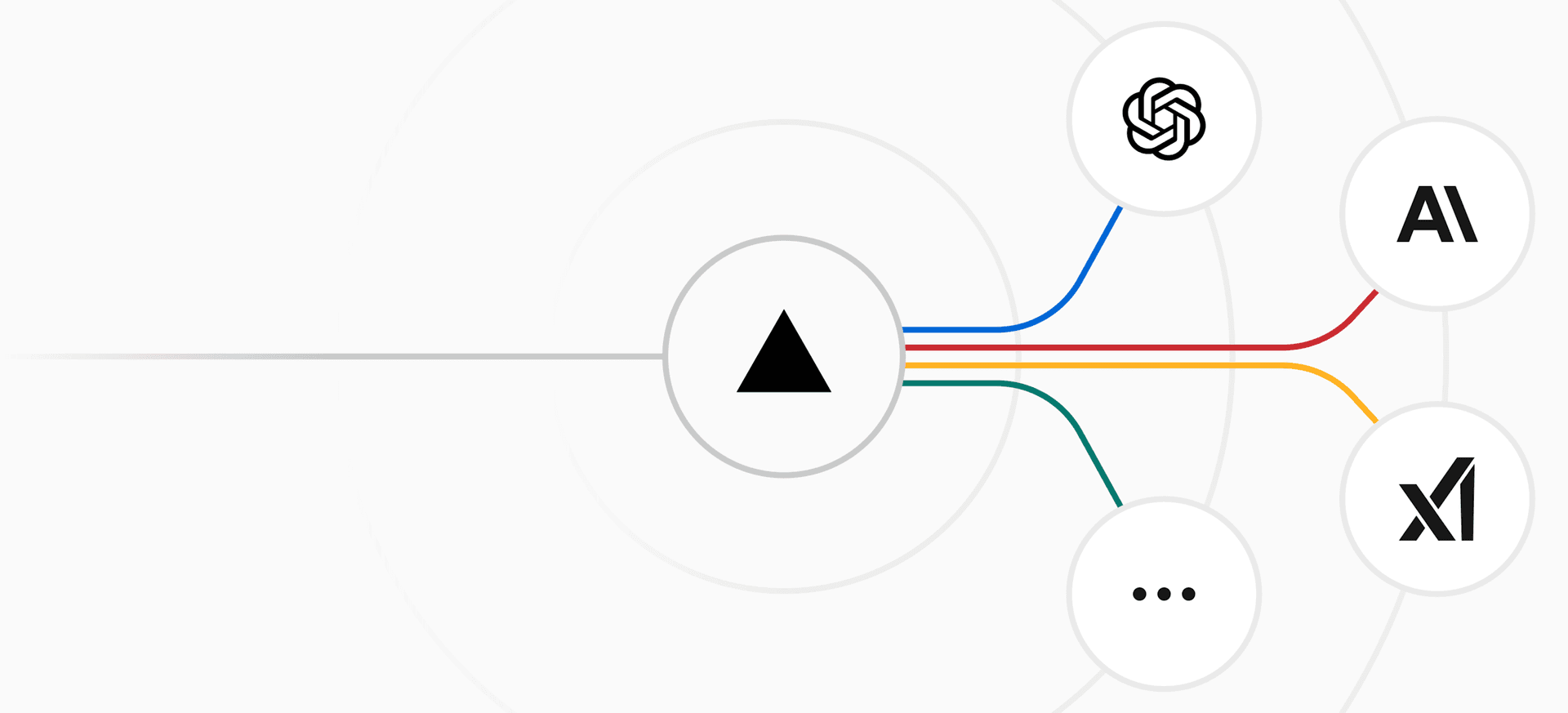

LLM gateways solve three problems that look separate but share one cause: fragmented visibility. When model requests go directly to multiple providers, you can track individual provider costs, but you cannot easily aggregate spending across teams, manage credentials centrally, or automatically route around outages. A gateway addresses this fragmentation because it sits in position to see every request.

The value isn't in any single feature. It's in what centralization enables. Cost tracking works because the gateway meters what it routes. Security works because credentials live in one place. Failover works because the gateway already integrates with multiple LLM providers. Teams adopt gateways when billing fragmentation, key sprawl, and reliability gaps surface.

This article explains why access, cost, and security converge in one layer, how request routing creates that convergence, and what changes when you stop viewing your gateway as a convenience and start managing it as infrastructure: AI projects move faster, and provider instability becomes manageable.

What is an LLM gateway?

An LLM gateway is a routing layer that sits between your application and model providers. It normalizes requests across providers while centralizing operational controls. Every model request your application makes passes through the gateway, gets translated to the target provider's format, and returns as a normalized response.

Most teams reach the same point: you outgrow a single provider. You add Claude alongside GPT. You test Gemini for specific tasks. You experiment with open source models for cost-sensitive workloads. The gateway handles translation so your application code doesn't accumulate provider-specific code paths.
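To make the translation step concrete, here is a minimal sketch of how a gateway might map one normalized request onto provider-shaped payloads. The `NormalizedRequest` type, the `splitModelId` and `toProviderPayload` functions, and the payload shapes are all illustrative assumptions, simplified well below what real provider APIs require. This is not how Vercel AI Gateway is implemented.

```typescript
// Hypothetical normalized request; field names are assumptions for illustration.
interface NormalizedRequest {
  model: string; // "provider/model-name", e.g. "anthropic/claude-sonnet-4"
  prompt: string;
  maxTokens: number;
}

// Splits "provider/model-name" into its two parts.
function splitModelId(model: string): { provider: string; name: string } {
  const slash = model.indexOf("/");
  if (slash <= 0 || slash === model.length - 1) {
    throw new Error(`Expected "provider/model", got "${model}"`);
  }
  return { provider: model.slice(0, slash), name: model.slice(slash + 1) };
}

// Builds a simplified provider-specific body. Real APIs have more fields
// and stricter rules; these shapes only show that the formats differ.
function toProviderPayload(req: NormalizedRequest): Record<string, unknown> {
  const { provider, name } = splitModelId(req.model);
  switch (provider) {
    case "openai":
    case "anthropic":
      // Chat-style body: a list of role-tagged messages.
      return {
        model: name,
        max_tokens: req.maxTokens,
        messages: [{ role: "user", content: req.prompt }],
      };
    case "google":
      // Content-parts style body.
      return {
        model: name,
        generationConfig: { maxOutputTokens: req.maxTokens },
        contents: [{ parts: [{ text: req.prompt }] }],
      };
    default:
      throw new Error(`No adapter registered for provider "${provider}"`);
  }
}
```

The application only ever builds a `NormalizedRequest`. Supporting a new provider means adding one `case` in the gateway, not a new code path in every service.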

Translation is essential, but the value comes from what centralization enables. The gateway's structural position (seeing every request) enables everything else: cost attribution works because you meter what you route, policy enforcement works because you intercept every request, failover works because you already integrate with multiple providers, and security works because credentials live in one place.

Vercel's AI Gateway is a service for connecting to hundreds of AI models through a single interface. It is built on Fluid compute, the same runtime powering Vercel Functions. In the first month, AI Gateway handled roughly 16,000 total runtime hours, but only 1,200 of those hours involved actual CPU work. The remaining 14,800 hours were spent waiting for AI providers to respond. That ratio is why Fluid's Active CPU Pricing matters: you pay CPU rates for less than 8% of runtime instead of 100%.
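The arithmetic behind that percentage can be checked from the rounded figures quoted above. The variable names are only for illustration.

```typescript
// Figures quoted in the paragraph above (approximate, first month of AI Gateway).
const totalRuntimeHours = 16000;
const activeCpuHours = 1200;

// Hours spent waiting on providers rather than doing CPU work.
const waitingHours = totalRuntimeHours - activeCpuHours; // 14800

// Share of runtime that involved actual CPU work.
const activeCpuShare = activeCpuHours / totalRuntimeHours; // 0.075, i.e. 7.5%
```

A 7.5% active share is what sits behind the "less than 8%" figure: the rest of the runtime is I/O wait.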

Why most AI integrations break under load

Building with LLMs without a gateway layer typically means building against a provider's API directly. That works until it doesn't. Provider outages, rate limits, and pricing changes become your problem to handle ad hoc. The failure modes aren't theoretical.

The core issue is observability. Without a centralized layer, you can't easily answer basic operational questions about which team is generating the most tokens, which model is causing latency spikes, or which features break when a provider goes down. The answers live in scattered logs, billing dashboards, and provider-specific APIs.

A gateway changes that. Because every request flows through a single layer, you get a consistent view across providers, models, and teams. That visibility is what makes cost attribution, policy enforcement, and failover tractable.

Request lifecycle through a gateway

Understanding the request path clarifies what a gateway can and can't do.

Every stage where the gateway intervenes is a place where you get control without changing application code. The application sends one format and receives one format regardless of which provider handled the request.
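One way to picture the request path is as a chain of stages, each of which can read or extend a shared context. The sketch below is an assumption-heavy illustration: the stage names are inferred from the capabilities this article discusses (authentication, routing, translation, metering), the `GatewayContext` type is hypothetical, and the provider call is stubbed. It is not a documented pipeline for any specific gateway.

```typescript
// Hypothetical request context that each stage reads and extends.
interface GatewayContext {
  model: string;
  prompt: string;
  team: string;
  trace: string[]; // names of the stages that have run, in order
  responseText?: string;
}

type Stage = (ctx: GatewayContext) => GatewayContext;

// Wraps a stage so the pipeline records that it ran.
const stage = (name: string, fn: Stage): Stage => (ctx) => {
  const next = fn(ctx);
  return { ...next, trace: [...next.trace, name] };
};

const pipeline: Stage[] = [
  stage("authenticate", (ctx) => {
    if (ctx.team === "") throw new Error("Unauthenticated request");
    return ctx;
  }),
  // The next two stages are placeholders: a real gateway would pick a
  // provider and map the request to that provider's format here.
  stage("route", (ctx) => ctx),
  stage("translate", (ctx) => ctx),
  // Stubbed provider call so the sketch runs without network access.
  stage("call-provider", (ctx) => ({
    ...ctx,
    responseText: `stub reply to: ${ctx.prompt}`,
  })),
  // Placeholders for response normalization and usage metering.
  stage("normalize", (ctx) => ctx),
  stage("meter", (ctx) => ctx),
];

// Runs every stage in order; the application sees only the final context.
function handle(ctx: GatewayContext): GatewayContext {
  return pipeline.reduce((acc, run) => run(acc), ctx);
}
```

Adding a control such as a rate limit or a policy check means adding a stage to `pipeline`. The caller of `handle` does not change.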

Cost attribution across providers

Token costs vary significantly across providers and models. GPT-4o, Claude Sonnet, and Gemini Flash have different pricing structures, and which model handles a given request affects your bill. Without routing through a central layer, attributing those costs back to specific teams, features, or environments requires joining data from multiple sources.

A gateway meters at the point of routing. Every request carries context (team, project, environment) and the gateway records token consumption against that context. The result is cost data that matches how your engineering organization and app are structured, not how your billing provider happens to group it.
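As a rough sketch of metering at the point of routing, the code below aggregates per-request usage records by one context dimension. The `UsageRecord` shape, the model names, and the prices are placeholders invented for illustration. They are not real provider prices, and this is not the data model Vercel AI Gateway uses.

```typescript
// Hypothetical usage record a gateway might write for each routed request.
interface UsageRecord {
  team: string;
  project: string;
  environment: "production" | "preview" | "development";
  model: string;
  inputTokens: number;
  outputTokens: number;
}

// Placeholder prices in USD per million tokens. NOT real provider prices.
const PRICE_PER_MILLION: Record<string, { input: number; output: number }> = {
  "example/large-model": { input: 3, output: 15 },
  "example/small-model": { input: 0.5, output: 1.5 },
};

// Cost of a single request under the placeholder price table.
function costOf(record: UsageRecord): number {
  const price = PRICE_PER_MILLION[record.model];
  if (!price) throw new Error(`No price configured for ${record.model}`);
  return (record.inputTokens * price.input + record.outputTokens * price.output) / 1e6;
}

// Sums cost per value of one context dimension, e.g. per team or per project.
function attribute(
  records: UsageRecord[],
  by: "team" | "project" | "environment",
): Map<string, number> {
  const totals = new Map<string, number>();
  for (const record of records) {
    const key = record[by];
    totals.set(key, (totals.get(key) ?? 0) + costOf(record));
  }
  return totals;
}
```

Because every record already carries team, project, and environment, the same data answers "which team spent the most" and "what does preview traffic cost" by changing only the `by` argument.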

Vercel's AI Gateway exposes token spend through its observability features. You get per-model, per-project cost tracking without instrumenting individual API calls. The data is already there because the gateway saw every request.

Centralized credential management

Provider credentials are operationally risky when distributed. When API keys live in environment variables across dozens of services, rotation becomes a coordination problem. A compromised key in one service can affect unrelated workloads. Auditing which services have access to which providers requires checking configuration across the entire codebase.

A gateway inverts this. Application code authenticates to the gateway, and the gateway authenticates to providers. Provider credentials exist in one place. Rotation happens once. Audit logs show exactly which gateway requests hit which provider.
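The inversion can be sketched as a small vault that only the gateway can read. Everything here is hypothetical: the `CredentialVault` class, the in-memory storage, and the single `Authorization: Bearer` header format, which real providers do not all share. A production gateway would sit on top of a secrets manager instead.

```typescript
// Hypothetical audit entry: which caller triggered a call to which provider.
interface AuditEntry {
  caller: string;
  provider: string;
  at: Date;
}

// Illustrative in-memory vault; provider keys never leave this class
// except as headers on outbound provider requests.
class CredentialVault {
  private keys = new Map<string, string>();
  private auditLog: AuditEntry[] = [];

  // Rotation is a single write because provider keys live only here.
  rotate(provider: string, newKey: string): void {
    this.keys.set(provider, newKey);
  }

  // Builds outbound headers for a provider call and records who triggered it.
  // The header format is a simplifying assumption.
  headersFor(caller: string, provider: string): Record<string, string> {
    const key = this.keys.get(provider);
    if (key === undefined) {
      throw new Error(`No credential stored for ${provider}`);
    }
    this.auditLog.push({ caller, provider, at: new Date() });
    return { Authorization: `Bearer ${key}` };
  }

  // Read-only view of the audit trail.
  audit(): readonly AuditEntry[] {
    return this.auditLog;
  }
}
```

Services identify themselves to the gateway as `caller`, and the gateway attaches the provider key. Rotating a key is one `rotate` call, and the next request from any service picks it up.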

For teams on Vercel, applications authenticate to AI Gateway using OIDC tokens rather than static API keys. The gateway injects provider credentials at routing time. Applications never see the underlying provider keys. You also have the option to bring your own provider keys to the gateway if you prefer to manage credentials directly, giving you flexibility in how you handle authentication.

Failover and reliability

LLM providers have outages. Rate limits get hit. Latency can spike without warning. Applications that call providers directly absorb these failures as errors. A gateway can absorb them as routing decisions.

The mechanism is straightforward: when a provider call fails or degrades past a threshold, the gateway routes the same request to a different provider. From the application's perspective, the request succeeded. The failure is handled at the infrastructure layer without code changes.

This works because the gateway already maintains integrations with multiple providers. Adding failover is a routing policy change, not an integration project.
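A minimal sketch of that routing policy, assuming each provider integration is exposed as an async function: try providers in order, treat an error or a timeout as a failure, and move on to the next. The `Route` type, `withTimeout`, and `routeWithFailover` are hypothetical names, and a fixed timeout stands in for whatever degradation threshold a real gateway applies.

```typescript
// A provider integration reduced to a single async call (an assumption).
type ProviderCall = (prompt: string) => Promise<string>;

interface Route {
  name: string;
  call: ProviderCall;
}

// Rejects if the wrapped promise does not settle within `ms` milliseconds.
async function withTimeout<T>(promise: Promise<T>, ms: number): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<never>((_, reject) => {
    timer = setTimeout(() => reject(new Error(`Timed out after ${ms} ms`)), ms);
  });
  try {
    return await Promise.race([promise, timeout]);
  } finally {
    clearTimeout(timer);
  }
}

// Tries each route in order and returns the first successful response.
async function routeWithFailover(
  prompt: string,
  routes: Route[],
  timeoutMs: number,
): Promise<{ servedBy: string; text: string }> {
  const failures: string[] = [];
  for (const route of routes) {
    try {
      const text = await withTimeout(route.call(prompt), timeoutMs);
      return { servedBy: route.name, text };
    } catch (error) {
      // Record the failure and fall through to the next provider.
      const reason = error instanceof Error ? error.message : String(error);
      failures.push(`${route.name}: ${reason}`);
    }
  }
  throw new Error(`All providers failed (${failures.join("; ")})`);
}
```

The caller receives a normal response plus a `servedBy` field for observability. It sees an error only when every configured provider has failed.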

Vercel AI Gateway routes across providers automatically. You configure primary and fallback providers per model type or per project, and the gateway handles the rest.

Unified access with AI SDK

For teams building with JavaScript or TypeScript, AI SDK provides a provider-agnostic interface that pairs directly with AI Gateway. The SDK abstracts provider differences at the code level; the gateway abstracts them at the infrastructure level. Together they give you a consistent programming model and a consistent operational model.

Switching models in your code looks like changing a string:

    import { generateText } from 'ai';

    const { text } = await generateText({
      model: 'anthropic/claude-sonnet-4',
      prompt: 'Explain how request routing works',
    });

Changing 'anthropic/claude-sonnet-4' to 'openai/gpt-4o' routes to a different provider without touching anything else. The gateway handles credential injection, request normalization, and response translation transparently.

When you should implement an AI gateway

Implementing a gateway early is a strategic decision for developer velocity, not just a reaction to operational pain. Even if you only use a single provider today, routing through a gateway decouples your application code from specific APIs. This removes friction from future experimentation. When you need to test a new model or migrate to a different provider, you update a routing configuration instead of rewriting application logic.
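To illustrate what "update a routing configuration" can look like, the sketch below keeps model choices in a table keyed by task, so application code asks for a task rather than a model. The `RoutePolicy` type, the task names, and `modelsFor` are hypothetical and do not reflect any gateway's actual configuration format. The model IDs are the ones used elsewhere in this article.

```typescript
// Hypothetical per-task routing policy.
interface RoutePolicy {
  primary: string;
  fallbacks: string[];
}

// Changing providers means editing this table, not the call sites.
const routingConfig: Record<string, RoutePolicy> = {
  summarize: { primary: "anthropic/claude-sonnet-4", fallbacks: ["openai/gpt-4o"] },
  classify: { primary: "openai/gpt-4o", fallbacks: [] },
};

// Returns the ordered list of model IDs to try for a task.
function modelsFor(
  task: string,
  config: Record<string, RoutePolicy> = routingConfig,
): string[] {
  const policy = config[task];
  if (policy === undefined) {
    throw new Error(`No routing policy for task "${task}"`);
  }
  return [policy.primary, ...policy.fallbacks];
}
```

Testing a new model for summarization is then a one-line change to `routingConfig.summarize.primary`, and every call site that asks for `summarize` follows along.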

As your team and product scale, operational challenges naturally emerge. Multiple teams sharing AI infrastructure creates a need for cost attribution. Key rotation across dozens of services turns into a security burden. Provider reliability issues start affecting your product SLAs.

When you already have a gateway in place, you get the solutions to these scaling problems for free. Because the routing layer intercepts every request, you can enable cost tracking, centralized credentials, and automated failover without changing your architecture. That is the structural argument for treating the gateway as infrastructure from the beginning.

As Guillermo Rauch has noted, AI agents work best when there is a reliable dispatcher layer beneath them. The gateway is that layer. It does not change what your agents do, but it makes the infrastructure they depend on predictable.

Getting started

Vercel AI Gateway is available on all plans. You can connect to it through AI SDK or directly via API.

Request a demo or get started on Vercel to explore AI Gateway for your team.