Organizations that prototype faster ship faster. If a picture is worth a thousand words, an interactive prototype is worth a thousand meetings.

AI-powered prototyping tools reduce the time it takes for engineering, product, and design to align on what to build, while giving teams flexibility to explore more ideas and gather customer feedback before committing to ship.

We've partnered with customers who cut prototyping time by as much as 75% after adopting these tools, but most AI pilots stall in the proof-of-concept (POC) phase before they get there.

This guide shows you how to set up a pilot that succeeds: define requirements, measure outcomes, and roll the tool out without losing momentum to internal friction.

The 75% improvement we've seen comes from testing with real projects and real workflows, not toy demos. Before you run a pilot, define what success looks like for your team.

Start by figuring out what your team actually needs from a tool. Common categories include:

- Security: Can you opt out of data training? Does the vendor hold the certifications you require before a tool can access internal systems?

- Usability: What does the path from idea to usable prototype look like? How much onboarding is acceptable?

- Prototype fidelity: Not every prototype needs pixel-perfect design. At what point does higher fidelity actually change decisions for your team?

- Collaboration: Do you need SSO and role-based access so teams can share prototypes safely?

- Integration: Which APIs, services, or data sources must prototypes connect to?

- Change appetite: How much disruption can your existing developer workflows tolerate?

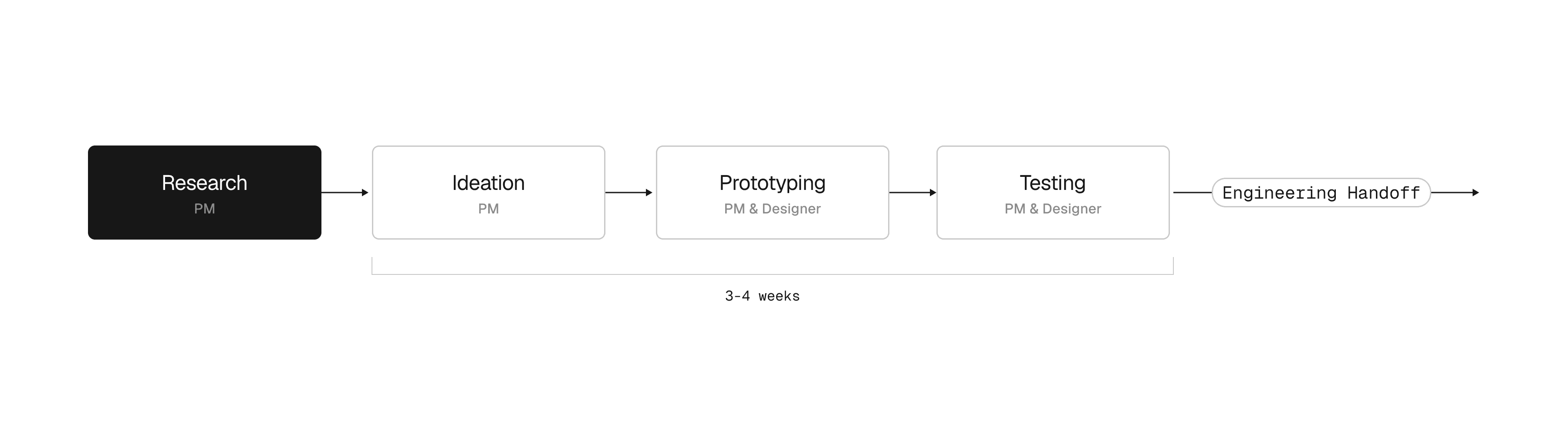

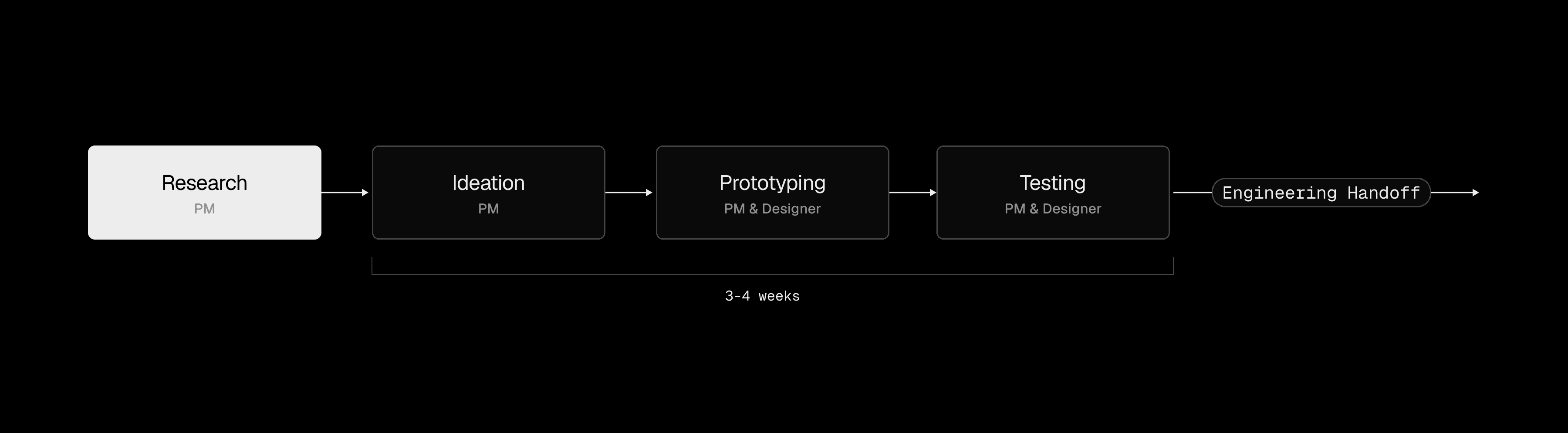

Next, map how your team actually builds things today:

- Who is involved at each stage

- What actions they perform

- Why each step exists

- Which tools are used

- How long each step takes

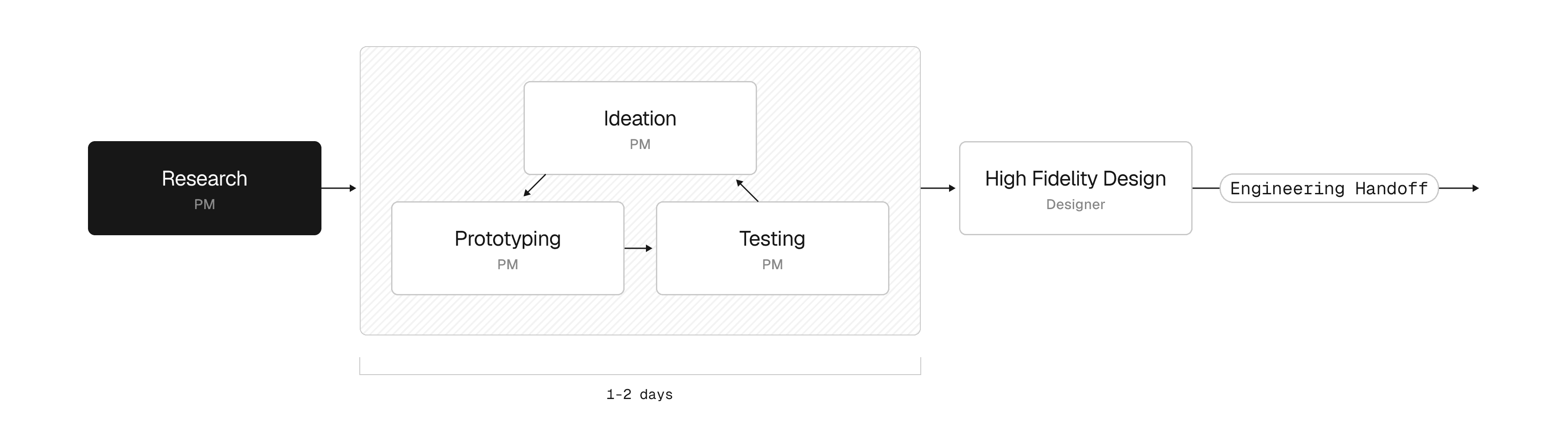

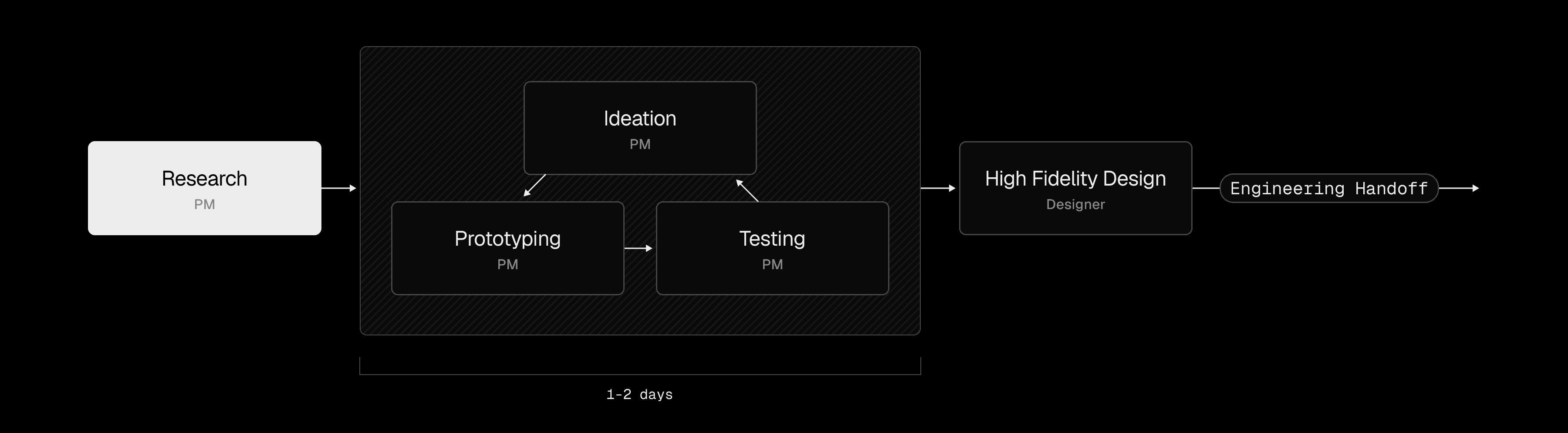

AI prototyping tools compress this timeline by letting teams run ideation, prototyping, and testing in parallel instead of sequentially.
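To make the workflow map concrete, it can help to record each step as data and compare a strictly sequential timeline with an overlapped one. The sketch below is illustrative only: the `Step` fields mirror the mapping questions above, while the step names, owners, and durations are made-up placeholders, and treating overlapped stages as finishing when the longest one does is an idealized assumption that ignores dependencies between stages.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    owner: str    # who is involved
    action: str   # what they do
    reason: str   # why the step exists
    tool: str     # which tool is used
    days: float   # how long the step takes


# Hypothetical workflow; replace with your own map.
workflow = [
    Step("ideation", "PM", "write brief", "align on the problem", "docs", 3),
    Step("prototyping", "designer", "build mockups", "make the idea tangible", "design tool", 5),
    Step("testing", "researcher", "run user sessions", "validate with customers", "video calls", 4),
]


def sequential_days(steps):
    """Total duration when every step waits for the previous one."""
    return sum(s.days for s in steps)


def overlapped_days(steps):
    """Idealized duration if all steps ran fully in parallel (assumption)."""
    return max(s.days for s in steps)


def compression(steps):
    """Fraction of the sequential timeline saved in the idealized case."""
    return 1 - overlapped_days(steps) / sequential_days(steps)


print(sequential_days(workflow), overlapped_days(workflow))
```

Real pilots will land somewhere between the two numbers, which is why the per-step durations from your own map matter more than the idealized bound.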

Many teams also run surveys to understand where things break down. Surveys surface pain points and gaps in current capabilities that process docs tend to miss.

Once you know where the friction is, turn those pain points into metrics you can actually measure during the pilot. For prototyping, what matters most is efficiency and iteration velocity:

- Time from idea to validated prototype

- Number of prototypes your team can produce in a sprint

- How often prototypes survive first contact with users or stakeholders

- Reduction in designer or engineer hours spent on prototype work

When you can tie these back to business results, it's much easier to make the case for rolling the tool out more broadly.
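The four metrics listed above can be computed from a simple log of prototypes. This is a minimal sketch, assuming you track one record per prototype; the `PrototypeRecord` fields, the `pilot_metrics` helper, the baseline figure, and the sample data are all hypothetical and not part of any tool's API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class PrototypeRecord:
    idea_date: date
    validated_date: Optional[date]  # None if the prototype was never validated
    survived_first_review: bool     # survived first contact with users or stakeholders
    hours_spent: float              # designer plus engineer hours


def pilot_metrics(records, sprints, baseline_hours_per_prototype):
    """Summarize pilot records; the baseline comes from your pre-pilot workflow map."""
    if not records:
        raise ValueError("no prototype records to summarize")
    validated = [r for r in records if r.validated_date is not None]
    days = [(r.validated_date - r.idea_date).days for r in validated]
    avg_hours = sum(r.hours_spent for r in records) / len(records)
    return {
        "avg_days_to_validated": sum(days) / len(days) if days else None,
        "prototypes_per_sprint": len(records) / sprints,
        "survival_rate": sum(r.survived_first_review for r in records) / len(records),
        "hours_reduction": 1 - avg_hours / baseline_hours_per_prototype,
    }


# Made-up sample data for illustration only.
sample = [
    PrototypeRecord(date(2024, 1, 1), date(2024, 1, 4), True, 4),
    PrototypeRecord(date(2024, 1, 2), date(2024, 1, 7), False, 6),
    PrototypeRecord(date(2024, 1, 3), None, True, 5),
]
metrics = pilot_metrics(sample, sprints=2, baseline_hours_per_prototype=10)
print(metrics)
```

Keeping the baseline as an explicit input forces you to measure the current process before the pilot starts, which is what makes the reduction figure defensible later.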

Change management is the top reason AI pilots fail. We recommend a two-phase approach that builds internal expertise before expanding access. Plan for roughly 4-6 weeks total, starting with a 1-2 week focused POC with a small group, followed by a 2-4 week expanded trial.

In phase one, run a focused 1-2 week POC or hackathon with a small group of high-impact users. The goal is to show what a well-supported team can actually accomplish with the tool in a short window.

During this phase, work closely with the cohort to:

- Gather real use cases from participants' actual roadmaps, not hypothetical exercises

- Onboard participants through expert-led workshops

- Configure the tool to produce on-brand prototypes

- Create reusable prototyping templates that the broader team can adopt later

If phase one shows positive results, expand access. By this point, your pilot group should have enough expertise to help onboard others. This is also when you want department leads or VPs involved to help drive adoption. Work with your prototyping provider to design a rollout that includes:

- Kickoff workshops or hackathons to build momentum

- Weekly use case assignments to keep engagement high (open-ended office hours tend to see attendance drop off)

- Usage monitoring and executive updates to give leadership visibility into progress against trial metrics

We also recommend celebrating early adopters by having them showcase their work during the kickoff workshops. When people see a coworker build something real, it demystifies the process and the tool stops feeling intimidating.

As the trial wraps, gather metrics and compare against the success criteria you defined earlier. Measure impact both overall and for each phase.

If phase one outperforms phase two, that's expected. Phase one is your best-case scenario with a hand-picked, motivated group. Phase two shows you where the rest of the organization stands and where there's room to improve.
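One way to run this comparison is to encode each success criterion as a target with a direction, then check both phases against it. The sketch below assumes metric names like those you defined before the pilot; the `evaluate` and `phase_gap` helpers, the targets, and the phase results are hypothetical placeholders.

```python
def evaluate(criteria, results):
    """Check results against criteria.

    criteria maps metric -> (direction, target), where direction is
    "min" (value must be at least target) or "max" (at most target).
    """
    report = {}
    for metric, (direction, target) in criteria.items():
        value = results.get(metric)
        if value is None:
            report[metric] = "missing"
        elif direction == "min":
            report[metric] = "met" if value >= target else "not met"
        elif direction == "max":
            report[metric] = "met" if value <= target else "not met"
        else:
            raise ValueError(f"unknown direction: {direction}")
    return report


def phase_gap(phase_one, phase_two):
    """Difference (phase two minus phase one) for metrics present in both."""
    return {m: phase_two[m] - phase_one[m] for m in phase_one if m in phase_two}


# Made-up targets and results for illustration only.
criteria = {
    "avg_days_to_validated": ("max", 5),
    "survival_rate": ("min", 0.5),
}
phase_one = {"avg_days_to_validated": 3, "survival_rate": 0.7}
phase_two = {"avg_days_to_validated": 6, "survival_rate": 0.55}

print(evaluate(criteria, phase_one))
print(evaluate(criteria, phase_two))
print(phase_gap(phase_one, phase_two))
```

The gap between phases points to where broader onboarding or templates need work, rather than serving as a verdict on the tool.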

We've run this playbook with teams including Stripe and Stanley Black & Decker. The pattern is consistent: start small, measure against real workflows, and expand once the first cohort proves the value. At Vanta, for example, every PM now has a v0 license, and even non-technical PMs are building interactive prototypes and putting them in front of customers on their own.

For teams considering building internally, we've open-sourced and commercialized several of v0's foundational components. Whether you're ready to run a pilot or still deciding between build and buy, we can help you figure out the right approach.